Every website needs a site monitor, and Jetpack Monitor is a great tool for getting started. It’s free and sends an email notification whenever downtime is detected. If you want more control and flexibility over downtime notifications, I recommend using a paid site monitoring service or doing your own site monitoring.

Back in March 2018 I built a crude bash script site monitor. It makes a curl request to the home_url of each of my customers’ WordPress sites. If the response code is abnormal, meaning anything other than 200, then an issue is logged like so: Response code 404 for https://anchor.host. This wasn’t a complete site monitoring solution, but it worked as an early concept.

Attempting to monitor more than one site was painfully slow. I was able to speed things up slightly by running multiple curl commands in batches, but that still wasn’t great. What I really needed was concurrency, which would allow the monitor to run continuously with a set maximum number of checks running in parallel.

Then I discovered xargs has built-in support for concurrency!

A big thanks goes to this fantastic blog post: http://coldattic.info/post/7/. It’s old but everything still applies. In order to implement concurrency with xargs I first broke apart my site monitor script into two parts: (1) a dumb check and (2) a smart handler. I’ll start off with the dumb check script named monitor-check.sh.

#!/bin/bash

#
# Monitor check on a single valid HTTP url.
#
# `monitor-check.sh`
#

# Vars
user_agent="captaincore/1.0 (CaptainCore Health Check by CaptainCore.io)"
url=$1

run_command () {

  # Run the health check. Return http_code and body.
  response=$(curl --user-agent "$user_agent" --write-out '%{http_code}' --max-time 30 --silent "$url")

  # Pull out http code (the last 3 characters of the response)
  http_code=${response:${#response}-3}

  # Pull out body (everything before the http code)
  body=${response:0:${#response}-3}

  # valid body contains </html>
  html_end_tag=$(echo -e "$body" | perl -wnE'say for /<\/html>/g')

  # check if </html> found
  if [[ $html_end_tag == "</html>" ]]; then
    html_end_tag_check="true"
  else
    html_end_tag_check="false"
  fi

  # Build json for output
  read -r -d '' json_output << EOM
{
"http_code":"$http_code",
"url":"$url",
"html_valid":"$html_end_tag_check"
}
EOM

  # Left unquoted so word splitting collapses the JSON onto a single line
  echo $json_output

}

run_command "$1"

This script is really basic. It requires a valid URL supplied like this: monitor-check.sh https://anchor.host. It then runs a check and outputs useful JSON: { "http_code":"200", "url":"https://anchor.host", "html_valid":"true" }. It only checks one site and doesn’t do anything with the data. This is precisely what we can use for parallelizing.
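The least obvious step in monitor-check.sh is how the status code and the body are separated, since curl returns them as one string with the code appended to the end. Here is a minimal sketch of that split, assuming a made-up `response` value in place of a real curl call so it can be run without a network connection:

```shell
#!/bin/bash
# Hypothetical stand-in for curl's output: the page body with the
# 3-digit status code appended by --write-out '%{http_code}'.
response='<html><body>Hello</body></html>404'

# The last 3 characters are the status code.
http_code=${response:${#response}-3}

# Everything before those 3 characters is the body.
body=${response:0:${#response}-3}

echo "http_code: $http_code"
echo "body: $body"
```

Because HTTP status codes are always three digits, slicing by length works without needing a separator between the body and the code.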

Wrapping up the simple script with xargs for parallelizing.

Xargs is like a big expanding tool. You can feed a list of anything through xargs, and it expands that list into separate invocations of another command. It can be really powerful and complex. To explain, take a look at the following code sample.

urls_to_check="https://anchor.host https://captaincore.io https://austinginder.com"
echo $urls_to_check | xargs -P 15 -n 1 monitor-check.sh

This will expand the URLs contained in the bash variable $urls_to_check into 3 separate commands: monitor-check.sh https://anchor.host, monitor-check.sh https://captaincore.io and monitor-check.sh https://austinginder.com. The argument -n 1 passes one URL to each command, and -P 15 (uppercase P) means the commands will run concurrently up to a maximum of 15 processes. Because of concurrency, it’s common for the results to come back in a different order. That’s actually how you know they’re running concurrently, as the results are displayed in the order each site check completes:

{ "http_code":"200", "url":"https://austinginder.com", "html_valid":"true" }
{ "http_code":"200", "url":"https://anchor.host", "html_valid":"true" }
{ "http_code":"200", "url":"https://captaincore.io", "html_valid":"true" }

The second part of the script, the smart handler, passes the output from above to a PHP file which counts up the errors and builds an email if needed. PHP does all of the heavy work whereas bash does whatever PHP tells it to. That keeps the script running lightning fast. On a really cheap VPS this script can check 800+ sites in less than 2 minutes.
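That speed comes from xargs running the checks side by side. A rough way to see the effect locally is to swap the real check for a `sleep`. This is only a sketch: the `delays` list and the inline `sh -c` command are stand-ins for real URLs and monitor-check.sh, and are not part of the actual monitor.

```shell
#!/bin/bash
# Stand-in for monitor-check.sh: each "check" sleeps for the given
# number of seconds and then reports back. No network involved.
delays="2 1 2"

start=$(date +%s)

# -n 1 hands one delay to each command, -P 3 allows 3 at the same time.
results=$(echo $delays | xargs -P 3 -n 1 sh -c 'sleep "$1"; echo "finished after $1s"' _)

elapsed=$(( $(date +%s) - start ))

echo "$results"
echo "elapsed: ${elapsed}s"
```

With all three running at once, the total should land close to the longest delay (about 2 seconds) rather than the 5 seconds a sequential run would need, and the 1-second check is reported first even though it was second in the list.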

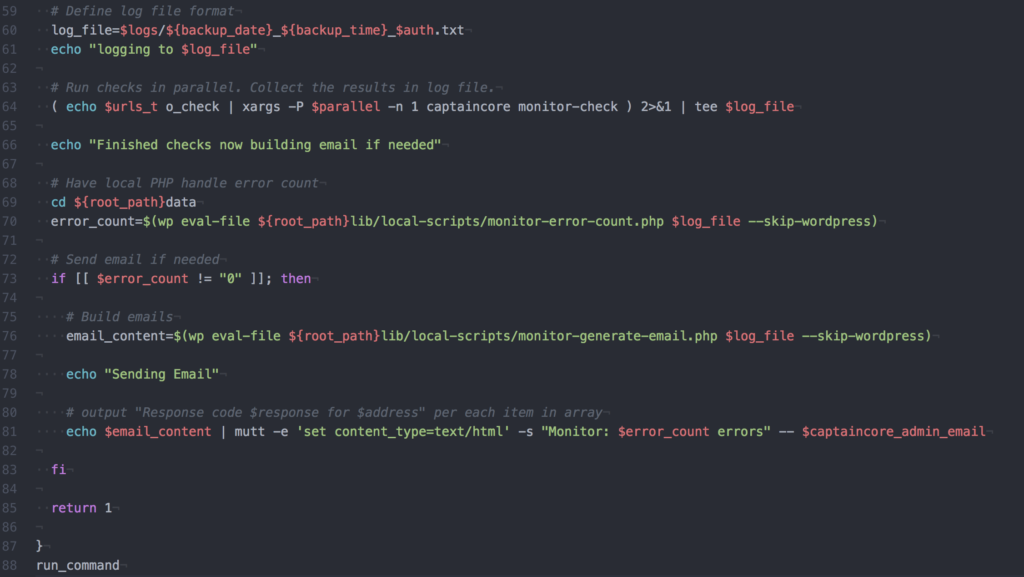

monitor.sh

#!/bin/bash

#
# Monitor check
#
# `monitor.sh`
#

run_command () {

  # Send errors to
  captaincore_email_to="support@changeme.com"

  # URLs to check
  urls_to_check="https://anchor.host https://captaincore.io https://austinginder.com"

  # Assign default parallel if needed
  if [[ $parallel == "" ]]; then
    parallel=15
  fi

  # Generate random auth
  auth=''; for count in {0..6}; do auth+=$(printf "%x" $(($RANDOM%16)) ); done;

  # Begin time tracking
  overalltimebegin=$(date +"%s")
  backup_date=$(date +'%Y-%m-%d')
  backup_time=$(date +'%H-%M')

  # Define log file format
  log_file=Logs/${backup_date}_${backup_time}_$auth.txt

  echo "logging to $log_file"

  # Run checks in parallel. Collect the results in log file.
  ( echo $urls_to_check | xargs -P $parallel -n 1 captaincore monitor-check ) 2>&1 | tee $log_file

  echo "Finished checks now building email if needed"

  # Have local PHP handle error count
  error_count=$(wp eval-file monitor-error-count.php $log_file --skip-wordpress)

  # Send email if needed
  if [[ $error_count != "0" ]]; then

    # Build emails
    email_content=$(wp eval-file monitor-generate-email.php $log_file --skip-wordpress)

    echo "Sending Email"

    # output "Response code $response for $address" per each item in array
    echo $email_content | mutt -e 'set content_type=text/html' -s "Monitor: $error_count errors" -- $captaincore_email_to

  fi

  return 1

}

run_command

monitor-error-count.php

<?php

$errors   = array();
$contents = file_get_contents( $args[0] );
$lines    = explode("\n", $contents);

foreach ($lines as $line) {

  $json = json_decode( $line );

  # Check if JSON valid
  if (json_last_error() !== JSON_ERROR_NONE ) {
    continue;
  }

  $http_code  = $json->http_code;
  $url        = $json->url;
  $html_valid = $json->html_valid;

  # Check if HTML is valid
  if ( $html_valid == "false" ) {
    $errors[] = "Response code $http_code for $url html is invalid\n";
    continue;
  }

  # Check if healthy
  if ( $json->http_code == "200" ) {
    continue;
  }

  # Check for redirects
  if ( $json->http_code == "301" ) {
    continue;
  }

  # Append error to errors for email purposes
  $errors[] = "Response code $http_code for $url\n";

}

echo count($errors);

monitor-generate-email.php

<?php

$errors   = array();
$warnings = array();
$contents = file_get_contents( $args[0] );
$lines    = explode("\n", $contents);

foreach ($lines as $line) {

  $json = json_decode( $line );

  # Check if JSON valid
  if (json_last_error() !== JSON_ERROR_NONE ) {
    continue;
  }

  $http_code  = $json->http_code;
  $url        = $json->url;
  $html_valid = $json->html_valid;

  # Check if HTML is valid
  if ( $html_valid == "false" ) {
    $errors[] = "Response code $http_code for $url html is invalid\n";
    continue;
  }

  # Check if healthy
  if ( $json->http_code == "200" ) {
    continue;
  }

  # Check for redirects
  if ( $json->http_code == "301" ) {
    $warnings[] = "Response code $http_code for $url\n";
    continue;
  }

  # Append error to errors for email purposes
  $errors[] = "Response code $http_code for $url\n";

}

# if errors then generate html
if ( count($errors) > 0 ) {

  $html = "<strong>Errors</strong><br /><br />";

  foreach ($errors as $error) {
    $html .= trim($error) . "<br />\n";
  }

  $html .= "<br /><strong>Warnings</strong><br /><br />";

  foreach ($warnings as $warning) {
    $html .= trim($warning) . "<br />\n";
  }

  echo $html;

}
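For a quick sanity check of a log file straight from the command line, the rules the PHP scripts apply (200 and 301 are fine, everything else or an invalid body is an error) can be approximated with grep. This is only a rough sketch and not part of the monitor itself: the sample log lines and example.* URLs below are made up, and the pattern matching assumes the exact one-line JSON shape that monitor-check.sh prints.

```shell
#!/bin/bash
# Hypothetical log contents in the same shape monitor-check.sh prints.
log_file=$(mktemp)
cat > "$log_file" << 'EOM'
{ "http_code":"200", "url":"https://example.com", "html_valid":"true" }
{ "http_code":"301", "url":"https://example.org", "html_valid":"true" }
{ "http_code":"404", "url":"https://example.net", "html_valid":"true" }
{ "http_code":"200", "url":"https://example.edu", "html_valid":"false" }
some stray non-JSON line
EOM

# Keep only lines that look like check results, then count the ones that
# are NOT (200 or 301 with valid HTML). This is pattern matching, not
# real JSON parsing, so it only works for this exact output format.
error_count=$(grep '"http_code"' "$log_file" \
  | grep -vcE '"http_code":"(200|301)".*"html_valid":"true"')

echo "errors: $error_count"
rm -f "$log_file"
```

In this sample the 404 response and the page with the missing closing tag are the two lines counted as errors, while the stray line is skipped, much like the PHP scripts skip lines that fail to decode as JSON.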