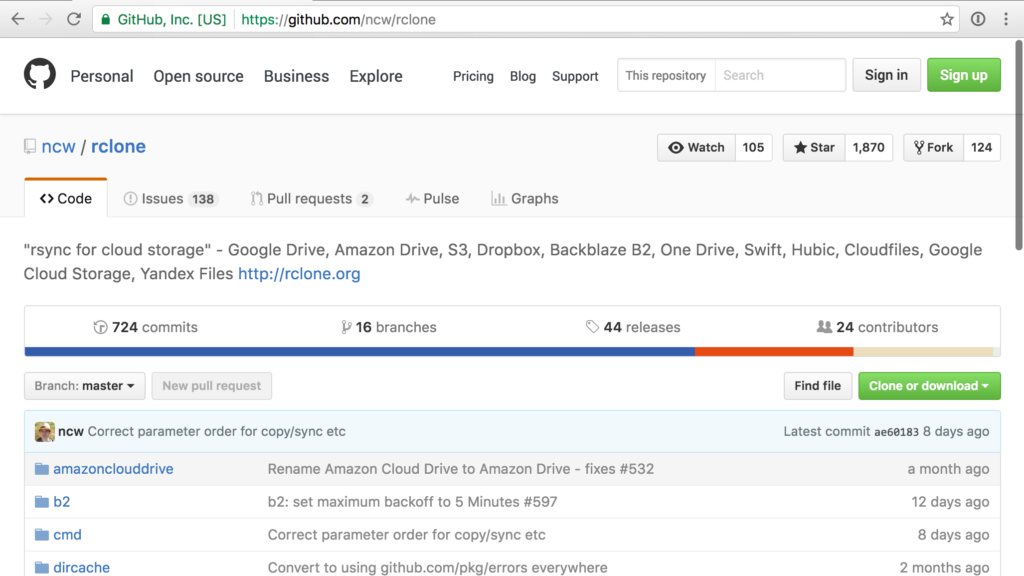

Every once in a while I find myself needing to move a large amount of data between a cloud storage provider and my local computer, like uploading 20 GB of photos to Google or pulling down 40 GB of backups from Amazon S3. A single command-line program called rclone makes this simple.

The format is fairly straightforward: `rclone [command] [source] [destination]`. The source and destination can each be a folder on your computer or a location on one of the many cloud storage providers rclone supports. A cloud location is written as `name:path`, where `name` is whatever you called that provider when you configured rclone.

Pulling down GBs from Amazon S3

The following will copy a folder from Amazon S3 to a local folder.

```shell
rclone sync Amazon:bucket/folder-name ~/Downloads/folder-name
```

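This is a smart sync. Cloud providers are not always reliable during uploads and downloads, so if any transfers fail, rclone automatically retries them. Running the same command again transfers only new or changed files; anything already up to date is skipped. Keep in mind that `sync` makes the destination identical to the source, so files that exist only in the destination are deleted. If you only want to add files, use `rclone copy` instead.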

Pushing data is just as simple

Uploading data to a cloud provider is just a matter of swapping the source and destination. The following pushes the local folder to a Google Drive account.

```shell
rclone sync ~/Downloads/folder-name Drive:bucket/folder-name
```

Before using rclone, some prep is required: install rclone, then run `rclone config` to set up each cloud provider as a named remote. The documentation on rclone’s website walks through both steps clearly.